What is CUDA Programming?

CUDA programming lets you take advantage of NVIDIA’s parallel computing technology. CUDA is a parallel computing platform and application programming interface (API) for general-purpose computing on graphics processing units (GPUs). The interface is built on C/C++, and you can also use it from other programming languages and frameworks.

CUDA Programming Model

The CUDA platform gives you direct access to the GPU’s virtual instruction set and parallel computational elements. Most CUDA code is written through its C/C++ interface. NVIDIA GPUs can also be programmed through frameworks such as OpenCL, and HIP code can be compiled to run on CUDA.

With the CUDA programming model, you write a scalar program: code that describes the work of a single thread. The GPU then runs that code across many threads in parallel, and the same program scales to GPUs of different sizes. The CUDA compiler and runtime rely on the model’s programming abstractions to exploit this built-in parallelism.

CUDA provides three key language extensions: CUDA blocks, shared memory, and synchronization barriers. A CUDA block is a group of threads. Shared memory is memory that all threads in a block can read and write. A synchronization barrier makes the threads in a block wait until every thread in that block has reached the same point before any of them continues.
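To make these three extensions concrete, here is a minimal sketch that reverses a small array inside one block. The names `reverseInBlock`, `reverseOnGpu`, and `BLOCK_SIZE` are hypothetical and chosen only for this illustration, and error checking is omitted for brevity.

```cuda
#include <cuda_runtime.h>

constexpr int BLOCK_SIZE = 64;  // hypothetical block size for this sketch

// Reverses an array of BLOCK_SIZE ints in place using a single thread block.
__global__ void reverseInBlock(int *data) {
    __shared__ int tile[BLOCK_SIZE];     // visible to every thread in the block
    int t = threadIdx.x;
    tile[t] = data[t];                   // each thread stages one element
    __syncthreads();                     // barrier: wait until the whole tile is written
    data[t] = tile[BLOCK_SIZE - 1 - t];  // now safe to read another thread's element
}

// Hypothetical host helper: copies the array to the GPU, runs the kernel,
// and copies the result back into hostData.
void reverseOnGpu(int *hostData) {
    int *devData = nullptr;
    size_t bytes = BLOCK_SIZE * sizeof(int);
    cudaMalloc(&devData, bytes);
    cudaMemcpy(devData, hostData, bytes, cudaMemcpyHostToDevice);
    reverseInBlock<<<1, BLOCK_SIZE>>>(devData);  // one block of BLOCK_SIZE threads
    cudaMemcpy(hostData, devData, bytes, cudaMemcpyDeviceToHost);
    cudaFree(devData);
}
```

Without the `__syncthreads()` call, a thread could read a slot of `tile` before the thread responsible for that slot has written it.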

A Code Example

The code below shows a CUDA kernel that adds two vectors, A and B, and writes the result into a third vector, C. The kernel is scalar in nature: it looks like code that adds two single numbers. When it runs on the GPU, it runs in a massively parallel fashion, with each vector element computed by a separate thread.

    /**
     * CUDA kernel code
     *
     * Computes the vector addition of A and B into C. All three vectors
     * have numElements elements.
     */
    __global__ void vectorAdd(const float *A, const float *B, float *C, int numElements) {
        // Global index of this thread across the whole grid.
        int i = blockDim.x * blockIdx.x + threadIdx.x;

        // Guard: the grid may contain more threads than there are elements.
        if (i < numElements) {
            C[i] = A[i] + B[i];
        }
    }

A CUDA Program’s Design and Implementation

The CUDA programming model lets your applications scale transparently to GPUs with more processor cores. Using CUDA’s language abstractions, you divide a program into smaller, independent sub-problems, each handled by one CUDA block.

Each sub-problem can be broken into finer pieces that the threads in a block solve cooperatively and in parallel. The CUDA runtime decides the schedule and order in which CUDA blocks run on the multiprocessors, so the same program scales to a GPU with any number of multiprocessors.

Figure 1 illustrates this with a CUDA program made up of eight CUDA blocks and shows how the runtime allocates the blocks to streaming multiprocessors (SMs). A smaller GPU with four SMs gets two CUDA blocks per SM, while a larger GPU with eight SMs gets one CUDA block per SM. This allocation gives you performance scalability without any change to the source code.
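The arithmetic behind the figure can be sketched in a few lines. The helper names `blocksPerSm` and `smCount` are hypothetical. The even split is a simplification for illustration, since the real scheduler assigns blocks dynamically as SMs become free.

```cuda
#include <cuda_runtime.h>

// Number of blocks each SM receives if numBlocks are spread evenly (rounded up).
// Assumption: an even split, which mirrors the figure rather than the real scheduler.
int blocksPerSm(int numBlocks, int numSms) {
    return (numBlocks + numSms - 1) / numSms;
}

// Returns the number of SMs on the given device, or -1 if the query fails.
int smCount(int device) {
    cudaDeviceProp prop;
    if (cudaGetDeviceProperties(&prop, device) != cudaSuccess) {
        return -1;
    }
    return prop.multiProcessorCount;
}
```

For the eight-block program described above, `blocksPerSm(8, 4)` evaluates to 2 and `blocksPerSm(8, 8)` evaluates to 1. Passing `smCount(0)` as the second argument gives the figure for whichever GPU is installed as device 0.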

CUDA Program Structure

A typical CUDA program contains code for both the CPU and the GPU. A traditional C program can be thought of as a CUDA program that contains only host code. In CUDA terminology, the CPU is the “host” and the GPU is the “device”.

Host code and device code are compiled by different compilers:

- Traditional C compilers like GCC can be used to compile host code.

- Device code uses CUDA’s language extensions, so it needs a compiler that understands them. For NVIDIA GPUs, this is the NVIDIA CUDA Compiler (NVCC).

NVCC separates host code from device code by looking for CUDA keywords. Data-parallel functions in the device code are called “kernels” and are marked with keywords such as `__global__`. NVCC recognizes these keywords and compiles the device code, which is then executed on the GPU, while the host code is passed on to the host compiler.
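As a rough illustration of how these keywords split a single source file, the sketch below marks one function for the device, one as a kernel, and leaves one as plain host code. The function names are hypothetical and error checking is omitted.

```cuda
#include <cuda_runtime.h>

// __device__: runs on the GPU and can only be called from device code.
__device__ float square(float x) {
    return x * x;
}

// __global__: a kernel. It runs on the GPU and is launched from host code.
__global__ void squareAll(float *data, int n) {
    int i = blockDim.x * blockIdx.x + threadIdx.x;
    if (i < n) {
        data[i] = square(data[i]);
    }
}

// No qualifier: ordinary host code, handed to the host compiler.
void squareOnGpu(float *hostData, int n) {
    float *devData = nullptr;
    size_t bytes = n * sizeof(float);
    cudaMalloc(&devData, bytes);
    cudaMemcpy(devData, hostData, bytes, cudaMemcpyHostToDevice);
    int threads = 128;
    int blocks = (n + threads - 1) / threads;  // round up to cover all n elements
    squareAll<<<blocks, threads>>>(devData, n);
    cudaMemcpy(hostData, devData, bytes, cudaMemcpyDeviceToHost);
    cudaFree(devData);
}
```

Assuming the code is saved in a file called `square.cu` together with a `main` function, it could be built with a command along the lines of `nvcc -o square square.cu`.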

CUDA C Program Execution

The number of threads a CUDA program launches is up to you, within hardware limits such as the maximum number of threads per block (1024 on current NVIDIA GPUs). Choose the number carefully, because it affects performance. Threads are grouped into blocks, and blocks are arranged into a grid; both blocks and grids can have up to three dimensions. Each thread gets a unique identifier, which determines which data it processes.
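The following sketch shows one common way to turn block and thread identifiers into a position in a two-dimensional array. The kernel `fillIndex`, the helper `fillIndexOnGpu`, and the 16 x 16 block shape are assumptions made for this example, not values taken from the text.

```cuda
#include <cuda_runtime.h>

// Each thread writes its own flattened index into a width x height matrix.
__global__ void fillIndex(int *out, int width, int height) {
    int col = blockIdx.x * blockDim.x + threadIdx.x;  // unique column for this thread
    int row = blockIdx.y * blockDim.y + threadIdx.y;  // unique row for this thread
    if (col < width && row < height) {                // skip threads past the edges
        out[row * width + col] = row * width + col;
    }
}

// Hypothetical host helper: launches a 2D grid of 2D blocks and copies the result back.
void fillIndexOnGpu(int *hostOut, int width, int height) {
    int *devOut = nullptr;
    size_t bytes = static_cast<size_t>(width) * height * sizeof(int);
    cudaMalloc(&devOut, bytes);
    dim3 block(16, 16);                                // 256 threads per block
    dim3 grid((width + block.x - 1) / block.x,         // enough blocks to cover
              (height + block.y - 1) / block.y);       // the whole matrix
    fillIndex<<<grid, block>>>(devOut, width, height);
    cudaMemcpy(hostOut, devOut, bytes, cudaMemcpyDeviceToHost);
    cudaFree(devOut);
}
```

Because the grid is rounded up to whole blocks, some threads fall outside the matrix, which is why the kernel checks `col` and `row` before writing.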

A GPU typically has its own on-board dynamic random access memory (DRAM), called global memory or device memory. To run a kernel on the GPU, the program must allocate memory on the device that is separate from host memory. The CUDA API provides functions for this. The typical sequence is:

- Allocate memory on the device.

- Copy data from host memory to device memory.

- Run the kernel on the device.

- Copy the result from device memory back to host memory.

- Free the memory allocated on the device.

Throughout this sequence, the host can access device memory and transfer data to and from the device. The device, however, does not initiate transfers to or from the host.
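The sequence above can be sketched with the CUDA runtime API as follows. The wrapper `addOnGpu` is a hypothetical name, the block size of 256 threads is an assumption, and the error handling is deliberately minimal.

```cuda
#include <cuda_runtime.h>

__global__ void vectorAdd(const float *A, const float *B, float *C, int numElements) {
    int i = blockDim.x * blockIdx.x + threadIdx.x;
    if (i < numElements) {
        C[i] = A[i] + B[i];
    }
}

// Adds hA and hB into hC on the GPU. Returns true if the final copy succeeded.
bool addOnGpu(const float *hA, const float *hB, float *hC, int n) {
    size_t bytes = n * sizeof(float);
    float *dA = nullptr, *dB = nullptr, *dC = nullptr;

    // 1. Allocate device memory.
    cudaMalloc(&dA, bytes);
    cudaMalloc(&dB, bytes);
    cudaMalloc(&dC, bytes);

    // 2. Copy the inputs from host memory to device memory.
    cudaMemcpy(dA, hA, bytes, cudaMemcpyHostToDevice);
    cudaMemcpy(dB, hB, bytes, cudaMemcpyHostToDevice);

    // 3. Run the kernel with enough blocks to cover all n elements.
    int threads = 256;
    int blocks = (n + threads - 1) / threads;
    vectorAdd<<<blocks, threads>>>(dA, dB, dC, n);

    // 4. Copy the result back. This call waits for the kernel to finish
    //    and may also report errors from the earlier asynchronous launch.
    cudaError_t err = cudaMemcpy(hC, dC, bytes, cudaMemcpyDeviceToHost);

    // 5. Free the device memory.
    cudaFree(dA);
    cudaFree(dB);
    cudaFree(dC);
    return err == cudaSuccess;
}
```

A production version would check the return value of every call rather than only the last one.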

CUDA Program Management

A CUDA program uses memory in two places: the host computer running the program and the GPU device executing the CUDA code. Each side follows the C memory model and has its own stack and heap, so data must be transferred explicitly between host and device.

In some cases, this means writing the memory transfer code by hand. On NVIDIA GPUs, you can use unified memory instead to avoid most of that manual coding, which saves time and effort. With unified memory, you allocate memory that is accessible from both CPUs and GPUs, and you can prefetch it to the processor that will use it next.
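A minimal sketch of unified memory, assuming a GPU and driver that support managed allocations. The kernel `scale` and the helper `scaleWithManagedMemory` are hypothetical names, and error checking is omitted.

```cuda
#include <cuda_runtime.h>

__global__ void scale(float *data, float factor, int n) {
    int i = blockDim.x * blockIdx.x + threadIdx.x;
    if (i < n) {
        data[i] *= factor;
    }
}

// Fills a managed array with 1.0f on the host, scales it on the GPU,
// and returns the last element as read back by the host.
float scaleWithManagedMemory(int n, float factor) {
    float *data = nullptr;
    cudaMallocManaged(&data, n * sizeof(float));  // one pointer, valid on host and device

    for (int i = 0; i < n; ++i) {
        data[i] = 1.0f;                           // host writes directly, no cudaMemcpy
    }

    int threads = 256;
    int blocks = (n + threads - 1) / threads;
    scale<<<blocks, threads>>>(data, factor, n);
    cudaDeviceSynchronize();                      // wait before the host reads the data

    float last = data[n - 1];
    cudaFree(data);
    return last;
}
```

Compared with the explicit workflow, there are no separate host and device buffers and no copy calls. Prefetching is done with `cudaMemPrefetchAsync`; it is left out of this sketch because its parameter list differs between CUDA toolkit versions.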

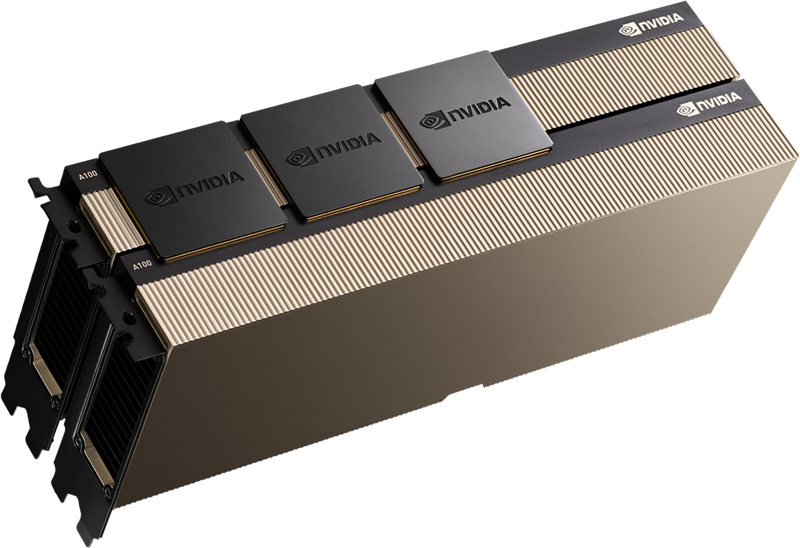
