Overview

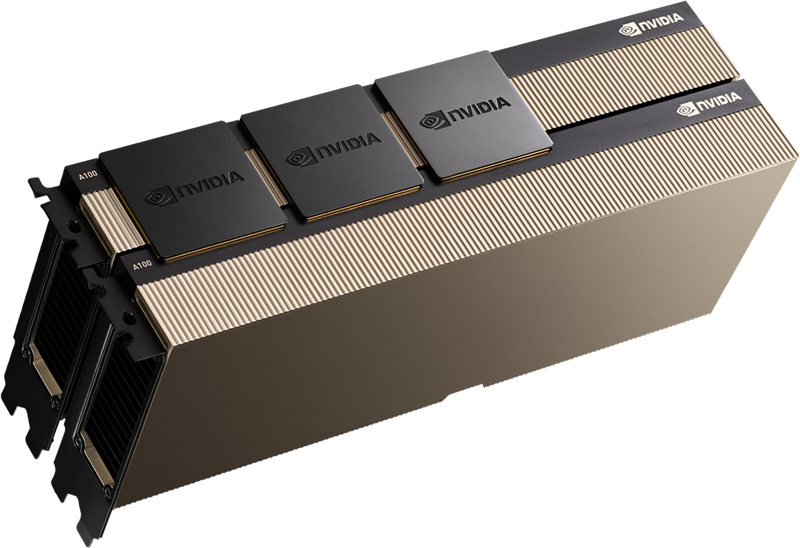

Data center-grade graphics processing units (GPUs) such as the NVIDIA A100 let enterprises build large-scale machine learning infrastructure. The A100 is based on the Ampere GA100 GPU, and its PCIe version is a dual-slot, 10.5-inch PCI Express Gen4 card. Designed and tuned for deep learning workloads, the A100 was positioned by NVIDIA at launch as the fastest deep learning GPU on the market.

The A100 is available in two versions: one built on NVIDIA’s high-performance NVLink interconnect and one that uses standard PCIe. It comes with either 40GB or 80GB of GPU memory.

It has the following features:

- Third-generation Tensor Cores: a new TF32 format, 2.5x the FP64 performance for HPC workloads, 20x the INT8 performance for AI inference, and support for the BF16 data format.

- Double the memory capacity of the previous generation, with over 2TB per second of memory bandwidth on the 80GB model.

- Multi-Instance GPU (MIG): each A100 can be partitioned into up to 7 isolated GPU instances, each with up to 10 GB of memory.

- Hardware support for sparse models, which delivers up to a 2x performance increase on sparse workloads.

- Third-generation NVLink and NVSwitch interconnects, which raise GPU-to-GPU bandwidth to 600 GB/s.

What are the specifications of the NVIDIA A100?

The A100 GPU is available in two varieties:

- NVIDIA A100 for NVLink – 4 or 8 SXM GPUs on an NVIDIA HGX™ A100 board, built on NVIDIA networking infrastructure and tuned for best performance.

- NVIDIA A100 for PCIe – Conventional PCIe slots allow you to use the NVIDIA A100 on a wider range of systems.

The following features are available in both editions:

- Peak FP64 performance is 9.7 TFLOPS, or 19.5 TFLOPS on FP64 Tensor Cores.

- Peak FP32 performance is 19.5 TFLOPS.

- On Tensor Cores, peak performance is 156 TFLOPS for TF32 (TensorFloat-32), 312 TFLOPS for FP16 and BFLOAT16, and 624 TOPS for INT8.

- On Tensor Cores, INT4 reaches a peak of 1,248 TOPS. For sparse workloads, the A100 doubles the TF32, FP16, BFLOAT16, INT8, and INT4 Tensor Core figures.
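To make the TF32 format in the list above more concrete, here is a minimal sketch of what reduced mantissa precision does to a value. It assumes TF32 keeps FP32's 8-bit exponent and shortens the mantissa from 23 bits to 10, and it truncates the extra bits for simplicity (real hardware rounding may differ). The function name `to_tf32` is hypothetical, not part of any NVIDIA API.

```python
import struct


def to_tf32(x: float) -> float:
    """Truncate a value to TF32-like precision (8-bit exponent, 10-bit mantissa).

    Illustrative only: the low 13 mantissa bits of the FP32 encoding are
    cleared, which is truncation, not the rounding a GPU may apply.
    """
    bits = struct.unpack("<I", struct.pack("<f", x))[0]  # FP32 bit pattern
    bits &= 0xFFFFE000  # keep sign, exponent, and the top 10 mantissa bits
    return struct.unpack("<f", struct.pack("<I", bits))[0]


# 2**-10 is the smallest step TF32 can represent next to 1.0.
print(to_tf32(1.0 + 2**-10))  # survives: 1.0009765625
print(to_tf32(1.0 + 2**-11))  # lost: 1.0
```

The range of representable magnitudes stays the same as FP32 because the exponent is untouched; only the fine detail of each value is reduced.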

The two versions differ in their memory and power specifications:

NVLink version – 40 or 80 GB of GPU memory, 1,555 or 2,039 GB/s of memory bandwidth respectively, up to seven MIG instances with 5 or 10 GB of memory each, and a maximum power of 400 W.

PCIe version – 1,555 GB/s of memory bandwidth, up to seven MIG instances with 5 GB of memory each, and a maximum power of 250 W.

Key Features of NVIDIA A100

3rd gen NVIDIA NVLink

NVIDIA’s third-generation high-speed NVLink interconnect improves the scalability, performance, and reliability of its GPUs. It is included in the A100 GPU and in the latest NVSwitch. The new NVLink adds more links per GPU and per NVSwitch, which increases GPU-to-GPU communication bandwidth, and it improves error detection and recovery.

The latest NVLink has a data rate of 50 Gbit/sec per signal pair, nearly twice that of the V100. A single A100 NVLink provides 25 GB/sec of bandwidth in each direction, similar to a V100 link, but uses only half as many signal pairs per link. The A100 has 12 links instead of the 6 in the V100, which provides a total bandwidth of 600 GB/sec instead of 300 GB/sec.
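The bandwidth totals quoted here follow from simple arithmetic, sketched below. The figure of 4 signal pairs per direction per A100 link is an assumption not stated in the text, as is counting the total over both directions; the function names are hypothetical.

```python
def link_bandwidth_gbytes(signal_pairs: int, gbit_per_pair: float) -> float:
    """Bandwidth of one link in one direction, in GB/s (8 bits per byte)."""
    return signal_pairs * gbit_per_pair / 8


def total_bandwidth_gbytes(links: int, gbytes_per_direction: float) -> float:
    """Total GPU bandwidth over all links, counting both directions."""
    return links * gbytes_per_direction * 2


# Assumption: 4 signal pairs per direction on an A100 link, 50 Gbit/s each.
a100_link = link_bandwidth_gbytes(signal_pairs=4, gbit_per_pair=50)
print(a100_link)                              # 25.0 GB/s per direction
print(total_bandwidth_gbytes(12, a100_link))  # 600.0 GB/s for the A100
print(total_bandwidth_gbytes(6, 25.0))        # 300.0 GB/s for the V100
```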

Support for NVIDIA Magnum IO and Mellanox Interconnects

InfiniBand and Ethernet interconnect solutions from NVIDIA and Mellanox are compatible with NVIDIA’s A100 Tensor Core GPU.

The Magnum IO API brings together compute, networking, file systems, and storage for multi-node accelerated systems. It interfaces with CUDA-X libraries to accelerate I/O for a wide range of workloads, including AI, analytics, and graphics processing.

Asynchronous Copy

The A100 introduces a new asynchronous copy instruction that loads data from global memory into the shared memory of a streaming multiprocessor (SM). The copy runs in the background while the SM does other work.

With async-copy, data moves directly from global memory into SM shared memory without passing through an intermediate register file (RF). Reduced register file bandwidth and more efficient use of memory bandwidth are just a few of the benefits.
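The overlap of copying and computing can be illustrated with a host-side analogy. The sketch below is plain Python, not CUDA: a background thread stands in for the asynchronous copy, and the main thread stands in for the SM. The names `load_tile` and `pipelined_sum_of_squares` are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def load_tile(data, start, size):
    """Stand-in for copying one tile from global memory to shared memory."""
    return data[start:start + size]


def pipelined_sum_of_squares(data, tile_size=4):
    """Process tiles while the next tile is fetched in the background."""
    total = 0
    with ThreadPoolExecutor(max_workers=1) as pool:
        pending = pool.submit(load_tile, data, 0, tile_size)
        for start in range(0, len(data), tile_size):
            tile = pending.result()            # wait for the current tile
            nxt = start + tile_size
            if nxt < len(data):
                # Start fetching the next tile before computing on this one.
                pending = pool.submit(load_tile, data, nxt, tile_size)
            total += sum(x * x for x in tile)  # compute overlaps the fetch
    return total


print(pipelined_sum_of_squares(list(range(10))))  # 285
```

The design point is that the fetch for tile N+1 is issued before the work on tile N begins, so the two can proceed at the same time.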

Asynchronous Barrier

Multi-threaded programs need barriers to coordinate competing and overlapping activities. The A100 GPU provides hardware acceleration for barriers, which CUDA 11 exposes as C++-conformant barrier objects. This allows you to:

- Split asynchronous barrier operations into separate arrive and wait phases.

- Implement producer-consumer patterns in CUDA.

- Synchronize CUDA threads at any granularity, not just at the warp or block level.
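The split between arriving and waiting can be sketched on the host with ordinary Python threads. This is an analogy under stated assumptions, not the CUDA barrier API: `SplitBarrier` and `run_demo` are hypothetical names, and the class only mimics the idea that a thread can signal arrival, keep doing independent work, and block later.

```python
import threading


class SplitBarrier:
    """Host-side analogy of a split arrive/wait barrier (not the CUDA API)."""

    def __init__(self, parties: int):
        self._parties = parties
        self._arrived = 0
        self._phase = 0
        self._cond = threading.Condition()

    def arrive(self) -> int:
        """Signal arrival without blocking; return a token for this phase."""
        with self._cond:
            token = self._phase
            self._arrived += 1
            if self._arrived == self._parties:
                # The last arrival completes the phase and wakes all waiters.
                self._arrived = 0
                self._phase += 1
                self._cond.notify_all()
            return token

    def wait(self, token: int) -> None:
        """Block until the phase identified by the token has completed."""
        with self._cond:
            self._cond.wait_for(lambda: self._phase > token)


def run_demo(n: int = 4) -> list:
    barrier = SplitBarrier(n)
    shared = [0] * n
    results = [0] * n

    def worker(i: int) -> None:
        shared[i] = i + 1         # produce a value that other threads read
        token = barrier.arrive()  # announce that the value is ready
        local = (i + 1) ** 2      # independent work before blocking
        barrier.wait(token)       # after this, every slot has been written
        results[i] = sum(shared) + local

    threads = [threading.Thread(target=worker, args=(i,)) for i in range(n)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results


print(run_demo(4))  # [11, 14, 19, 26]
```

Each worker acts as both producer (writing its slot) and consumer (reading all slots), which is the pattern the arrive/wait split is meant to make cheap.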

Task Graph Acceleration

The A100 adds hardware features that speed up the paths between operations in a task graph.

CUDA task graphs provide a more efficient model for submitting work to the GPU by allowing define-once, run-repeatedly execution. A task graph consists of a series of operations, such as kernel launches or memory copies, connected by dependencies.

Using predefined task graphs, several kernels can be launched in a single operation, enhancing the application’s performance and efficiency.
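The define-once, run-repeatedly idea can be sketched in plain Python. This is not the CUDA Graphs API: `TaskGraph`, `add_node`, and `instantiate` are hypothetical names, and the Python functions stand in for kernel launches and memory copies. The dependency order is resolved once, and the resulting launcher is reused for every run.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+


class TaskGraph:
    """Toy task graph: nodes are callables, edges are dependencies."""

    def __init__(self):
        self._funcs = {}
        self._deps = {}

    def add_node(self, name, func, deps=()):
        self._funcs[name] = func
        self._deps[name] = tuple(deps)

    def instantiate(self):
        """Resolve the execution order once and return a reusable launcher."""
        order = tuple(TopologicalSorter(self._deps).static_order())
        funcs = dict(self._funcs)

        def launch(state):
            for name in order:  # one call runs every node in order
                funcs[name](state)
            return state

        return launch


graph = TaskGraph()
graph.add_node("copy_in", lambda s: s.update(dev=list(s["host"])))
graph.add_node("scale", lambda s: s.update(dev=[2 * x for x in s["dev"]]),
               deps=["copy_in"])
graph.add_node("copy_out", lambda s: s.update(out=sum(s["dev"])),
               deps=["scale"])

launch = graph.instantiate()            # define once
print(launch({"host": [1, 2, 3]})["out"])  # 12
print(launch({"host": [10, 20]})["out"])   # 60 (same graph, new input)
```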

What is a Tensor Core?

Tensors are mathematical objects that describe the relationships between other mathematical objects. They are most commonly represented as multi-dimensional arrays of numbers.

Working with tensors means moving and processing a lot of data. GPUs are well suited to tensor processing because of their powerful parallel processing capabilities. General Matrix Multiplication (GEMM) is the most common mathematical operation performed on tensors, and all modern GPUs support it.
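As a reference for what GEMM computes, here is a minimal pure-Python sketch of the usual form D = alpha * (A x B) + beta * C. It is written for clarity, not speed, and the function name `gemm` and its argument order are choices made for this example.

```python
def gemm(alpha, a, b, beta, c):
    """Return alpha * (a @ b) + beta * c for matrices given as lists of rows."""
    m, k, n = len(a), len(b), len(b[0])
    if any(len(row) != k for row in a):
        raise ValueError("inner dimensions of a and b do not match")
    if len(c) != m or any(len(row) != n for row in c):
        raise ValueError("c must have shape m x n")
    return [
        [
            alpha * sum(a[i][p] * b[p][j] for p in range(k)) + beta * c[i][j]
            for j in range(n)
        ]
        for i in range(m)
    ]


a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
c = [[1, 1], [1, 1]]
print(gemm(1, a, b, 1, c))  # [[20, 23], [44, 51]]
```

Every output element is an independent sum of products, which is why the operation maps so well onto thousands of parallel GPU threads.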

The NVIDIA Ampere architecture boosts GEMM performance by:

- Increasing the number of FP16 fused multiply-add operations per Tensor Core per clock cycle from 64 to 256.

- Supporting new data formats.

- Accelerating the processing of sparse tensors (tensors with a large number of zero values).
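Sparse tensor acceleration on Ampere is commonly described in terms of a 2:4 structured pattern, in which at most two of every four consecutive weights are non-zero. That pattern is not spelled out in the text above, so treat it as an assumption. The sketch below prunes a row of weights to that pattern by keeping the two largest magnitudes in each group of four; `prune_2_of_4` is a hypothetical helper, not a library call.

```python
def prune_2_of_4(values):
    """Zero the two smallest-magnitude entries in each group of four."""
    if len(values) % 4 != 0:
        raise ValueError("length must be a multiple of 4")
    pruned = []
    for start in range(0, len(values), 4):
        group = values[start:start + 4]
        # Indices of the two entries with the largest absolute value.
        keep = sorted(range(4), key=lambda i: abs(group[i]), reverse=True)[:2]
        pruned.extend(v if i in keep else 0 for i, v in enumerate(group))
    return pruned


weights = [1, -5, 3, 0.5, 2, 2, -7, 9]
print(prune_2_of_4(weights))  # [0, -5, 3, 0, 0, 0, -7, 9]
```

Because half of each group is known to be zero, hardware can skip those multiplications, which is where the two-fold speedup for sparse workloads comes from.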

Tensor Cores are also available to users of Volta and Turing GPUs. To use Tensor Cores:

- Add a flag to the source code to indicate the use of Tensor Cores (see some examples in the CUDA 9 documentation).

- Make sure your data is in a supported format.

- Make sure the matrix dimensions are multiples of eight.
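A common way to meet the size requirement is to pad matrix dimensions before launching the operation. The sketch below assumes the rule is "every dimension is a multiple of 8", which is the usual guidance for FP16 on Volta and Turing; both helper names are hypothetical.

```python
def round_up(n: int, multiple: int = 8) -> int:
    """Smallest multiple of `multiple` that is greater than or equal to n."""
    return -(-n // multiple) * multiple


def tensor_core_friendly(m: int, n: int, k: int, multiple: int = 8) -> bool:
    """True when all three GEMM dimensions are multiples of `multiple`."""
    return all(dim % multiple == 0 for dim in (m, n, k))


print(tensor_core_friendly(1024, 512, 64))  # True
print(tensor_core_friendly(1000, 30, 64))   # False, 30 is not a multiple of 8
print(round_up(30))                         # 32, the padded size to use
```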

What are Multi-Instance GPUs (MIG)?

Multi-Instance GPU (MIG) technology partitions a single A100 GPU into as many as seven GPU instances. Each instance runs with its own dedicated memory, cache, and streaming multiprocessors. According to NVIDIA, MIG can increase GPU server utilization by up to 7X in some cases.

It is possible to execute up to seven separate AI or HPC tasks simultaneously on an A100 GPU in MIG mode. This is particularly beneficial for AI inference tasks that do not use the full capabilities of contemporary GPUs.

You could, for instance, create:

- Two 20GB MIG instances plus three 10GB instances.

- 3 MIG instances with 10GB each.

- 7 MIG instances with 5GB each.
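The example layouts above can be sanity-checked with a simplified rule: at most seven instances, and the memory they request must fit on the card. This is only a rough sketch. Real MIG configurations are built from a fixed set of profiles with their own placement rules, which this check ignores, and `fits_mig` is a hypothetical helper.

```python
def fits_mig(instances_gb, gpu_memory_gb, max_instances=7):
    """Simplified check: instance count and total memory must both fit."""
    if not instances_gb or len(instances_gb) > max_instances:
        return False
    return sum(instances_gb) <= gpu_memory_gb


print(fits_mig([20, 20, 10, 10, 10], gpu_memory_gb=80))  # True
print(fits_mig([5] * 7, gpu_memory_gb=40))               # True
print(fits_mig([10] * 8, gpu_memory_gb=80))              # False, over 7 instances
```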

Faults in one MIG instance do not affect the others, which protects the integrity of the entire system. Each instance provides assured quality of service (QoS), so your workload runs with the expected latency and throughput.

MIG for Training & Deployment

During training, MIG allows up to seven users to work on dedicated GPU instances at the same time, so numerous deep learning models can be tuned in parallel. Each user gets strong performance as long as a job does not need the full compute power of the A100 GPU.

MIG allows you to run up to seven inference processes on a single GPU during the inference step. Small, low-latency models that don’t require full power can benefit significantly from this.

Users don’t have to alter their current CUDA programming model to use MIG for AI and HPC, and MIG is compatible with both Linux and Kubernetes.

The NVIDIA A100 software stack includes MIG support, with an updated NVIDIA container runtime and additional Kubernetes resource types exposed through the device plugin.

MIG also works with hypervisors such as Red Hat Virtualization (RHV) and VMware vSphere, which makes live migration and multi-tenancy possible.

What is NVIDIA DGX A100?

DGX A100 is a powerful AI infrastructure server that delivers 5 petaFLOPS of AI compute performance in a single system. AI workloads can be developed, tested, and deployed on one platform.

DGX A100 systems can be combined into clusters of up to thousands of units. To expand your computing capacity, add more DGX units and use MIG to divide each A100 GPU into as many as seven instances. A DGX A100 has eight NVIDIA A100 GPUs, each of which can be divided into seven instances, for a total of up to 56 GPU instances in a single DGX, each with its own memory, cache, and compute resources.

You can also run deep learning, machine learning, and high-performance computing applications using containers from NVIDIA’s NGC Private Registry. Each container includes SDKs, trained models, and run scripts, as well as Helm charts for rapid Kubernetes deployment.
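The DGX A100 figures follow from per-GPU numbers, as the short sketch below shows. The value of 624 TFLOPS per GPU is an assumption: it is the FP16 Tensor Core peak with sparsity from NVIDIA's published A100 figures, taken here as the basis of the 5 petaFLOPS claim. The constant and function names are hypothetical.

```python
GPUS_PER_DGX = 8
MAX_MIG_INSTANCES_PER_GPU = 7
# Assumption: FP16 Tensor Core peak with sparsity, in TFLOPS per A100.
FP16_SPARSE_TFLOPS_PER_GPU = 624


def dgx_mig_instances() -> int:
    """Maximum number of MIG instances in one DGX A100."""
    return GPUS_PER_DGX * MAX_MIG_INSTANCES_PER_GPU


def dgx_peak_petaflops() -> float:
    """Aggregate peak across all GPUs, converted from TFLOPS to PFLOPS."""
    return GPUS_PER_DGX * FP16_SPARSE_TFLOPS_PER_GPU / 1000


print(dgx_mig_instances())   # 56
print(dgx_peak_petaflops())  # 4.992, which rounds to 5 petaFLOPS
```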
