Introduction: The Deep Learning Revolution and the Rise of GPU Servers. GPU-powered deep learning is a transformative technology that is driving innovation in several areas, with real-world impact ranging from personalized recommendations to medical diagnostics. At its core, deep learning involves training complex models on vast amounts of data. These models learn complex …

Category: Deep Learning

Understanding the Differences Between Artificial Intelligence (AI), Machine Learning (ML), and Deep Learning (DL)

Artificial intelligence (AI), machine learning (ML), and deep learning (DL) are all terms used in the technology world, but they have different meanings. AI is the broadest concept, encompassing any machine exhibiting intelligent behavior. Machine learning is a subfield of AI that allows computers to learn by identifying patterns in data without being explicitly programmed. …

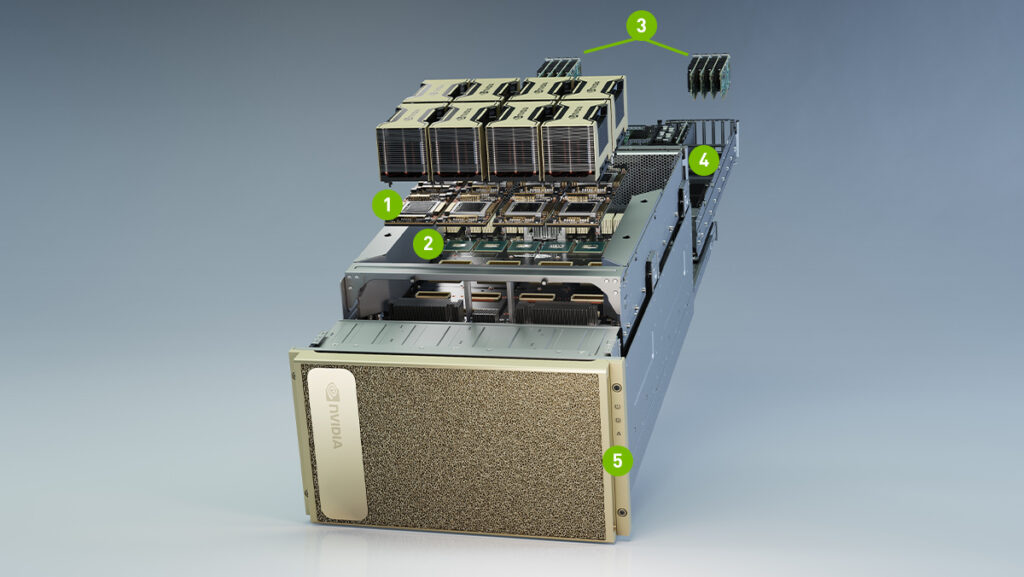

AI-Powered Supercomputing: Next Generation of Accelerated Efficiency

AI-powered supercomputing is transforming both scientific research and industrial applications by enabling unprecedented computational power and intelligence. This new era of computing technology leverages artificial intelligence to solve complex problems, analyze large-scale data sets, and accelerate innovation in various domains. At SeiMaxim, we offer GPU servers featuring top-tier NVIDIA Ampere A100, RTX …

NVIDIA RTX 6000 Ada Generation Graphics Card

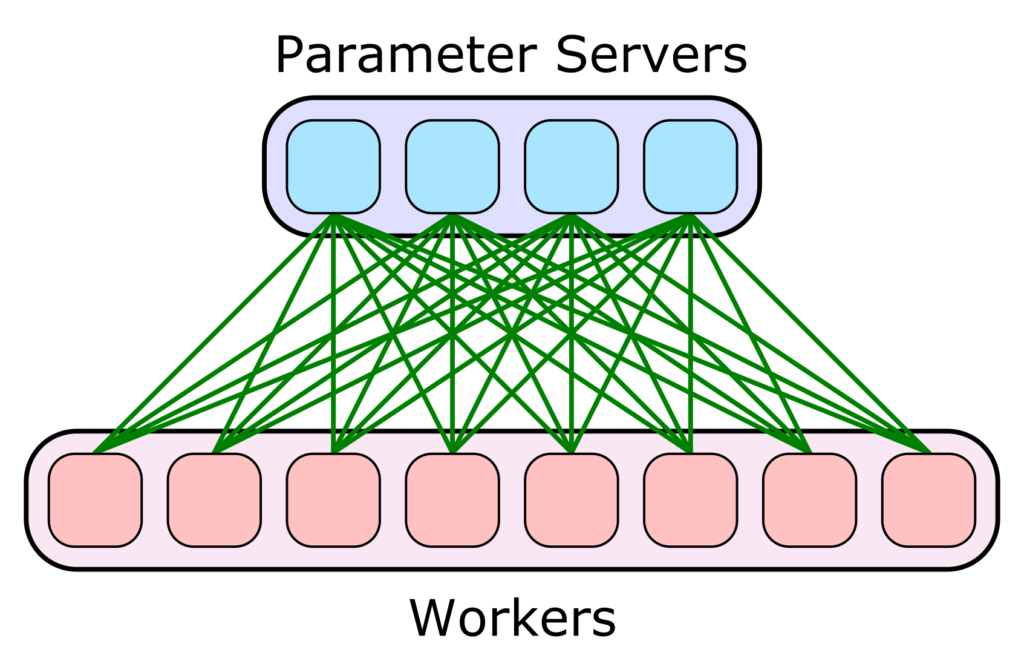

Distributed Training on Multiple GPUs

Data scientists and machine learning enthusiasts who train AI models at scale will inevitably hit a limit. As dataset sizes grow, processing times can increase from minutes to hours to days to weeks. Data scientists use distributed training across multiple GPUs to speed up the development of complete AI models in …

Top 10 Deep Learning Frameworks

Deep learning is an area of AI and machine learning that can learn from unlabeled data to handle image classification, computer vision, natural language processing (NLP), and other complex tasks. A neural network is called “deep” if it has at least three layers: an input layer, an output layer, and at least one hidden layer. Deep learning networks perform their computation across many hidden layers. Whether …

How AI is Used by Researchers to Help Mitigate Misinformation?

Researchers tackling the challenge of visual misinformation (think the TikTok video of Tom Cruise supposedly golfing in Italy during the pandemic) must continuously advance their tools to identify AI-generated images. NVIDIA is furthering this effort by collaborating with researchers to develop and test detector algorithms on its state-of-the-art image-generation models. Crafting a dataset …

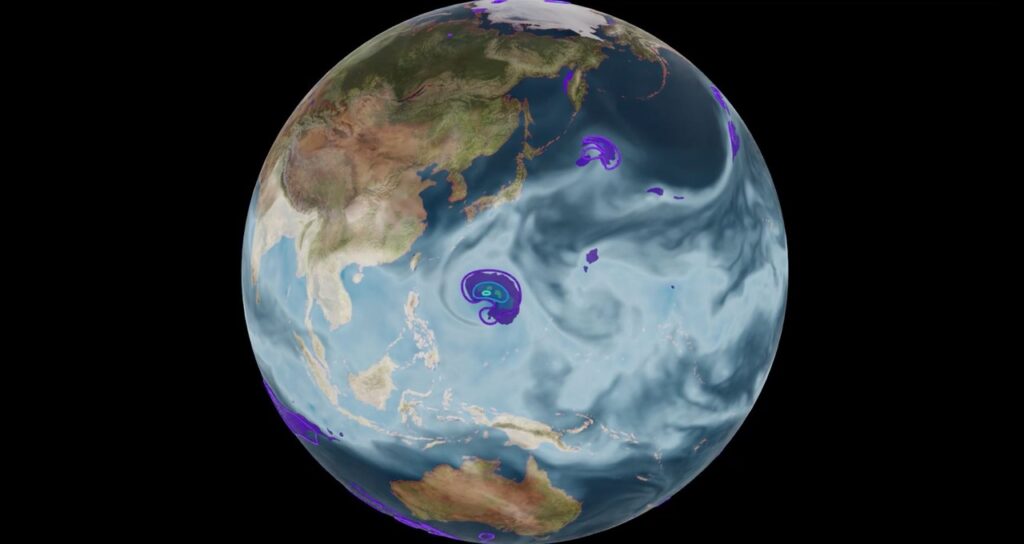

How Artificial Intelligence and Science Can Help Combat Climate Change?

In a 2009 New York Times opinion piece, Cornell University mathematician Steven Strogatz claimed that partial differential equations are “the most powerful tool humanity has ever created.” Anima Anandkumar, director of machine learning research at NVIDIA and professor of computing at the California Institute of Technology, shared this quote in the GTC talk AI4Science: The …

The Best GPU for Deep Learning

Historically, the training phase of the deep learning pipeline has been the most time-consuming, and it is also an expensive one. The most significant component of a deep learning pipeline is the human component: data scientists frequently wait hours or even days for training to complete, reducing …

Start-up problem when using A100 GPU in pass-through

A compute node with four NVIDIA A100 GPUs and one Mellanox InfiniBand adapter is part of an OpenStack Platform deployment. The compute node has been configured to provide GPUs in passthrough to the VMs. Scheduling a VM with a GPU in passthrough succeeds; however, VM creation fails on the first attempt. …

Why gaming GPU are not used for HPC?

Does using gaming GPUs for high-performance computing (HPC) make sense? The answer is both yes and no. Although gaming GPUs are significantly less expensive, they have several drawbacks that make them unsuitable for HPC environments. Gaming GPUs come in all shapes and sizes, and it’s easy to see why when you look at …

What is Artificial Intelligence (AI)?

Artificial intelligence uses computers and machines to simulate the human mind’s problem-solving and decision-making abilities. John McCarthy’s definition of artificial intelligence (AI) in a 2004 paper is one of many that have appeared over the past few decades: “It is a branch of engineering and research that deals with the creation of intelligent devices, …

What is CUDA Programming: Introduction and Examples

What is CUDA Programming? CUDA programming lets you take advantage of NVIDIA’s parallel computing technologies. CUDA is a platform and application programming interface (API) for graphics processing units (GPUs). The interface is built on C/C++, but it allows you to integrate other programming languages and frameworks as well. CUDA …

The complete guide to NVIDIA A100

Overview: Data center-grade graphics processing units (GPUs) such as the NVIDIA A100 can be used by enterprises to develop large-scale machine learning infrastructures. Based on the Ampere GA100 GPU, it’s a dual-slot 10.5-inch PCI Express Gen4 card. Designed and tuned for deep learning workloads, the A100 is the fastest deep learning GPU on the market. …

The world’s most advanced AI supercomputer has been installed in New Zealand

The University of Waikato has installed New Zealand’s most powerful supercomputer for AI applications as part of its goal to make New Zealand a global leader in AI research and development. The NVIDIA DGX A100 is the world’s most advanced system for running general AI tasks, and it is the first of its type in …