The NVIDIA A2 Tensor Core GPU enables low-power, small-footprint inference for NVIDIA AI at the edge. With a low-profile PCIe Gen4 card and a configurable thermal design power (TDP) of 40-60W, the A2 accelerates inference in any server for large-scale deployment.

Up to 20X More Inference Performance

AI inference is deployed to improve consumer lives with intelligent, real-time experiences and to extract insights from trillions of end-point sensors and cameras. Compared with CPU-only servers, edge and entry-level servers equipped with NVIDIA A2 Tensor Core GPUs deliver up to 20X more inference performance, instantly upgrading any server to handle modern AI.

Higher Intelligent Video Analytics (IVA) Performance for the Intelligent Edge

Servers equipped with NVIDIA A2 GPUs deliver up to 1.3X the performance in intelligent edge use cases such as smart cities, manufacturing, and retail. For intelligent video analytics (IVA) workloads, A2 GPUs enable more efficient deployments, with up to 1.6X better price-performance and 10% better energy efficiency than previous GPU generations.

Optimized for Any Server

NVIDIA A2 is optimized for inference workloads and deployments in entry-level servers constrained by space and thermal requirements, such as those at 5G edge and industrial sites. A2 combines a low-profile form factor with a low-power envelope, with TDP configurable from 40W to 60W, so it fits into virtually any server.
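Because the TDP is configurable, an administrator might choose a power point within the 40–60W window for each server. The Python sketch below is a hypothetical helper, not NVIDIA tooling: it clamps a requested limit to that range and builds the matching `nvidia-smi -pl` command without executing it. It assumes the A2's configurable TDP is exposed through the standard `nvidia-smi` power-limit option; check the limits your driver actually reports (for example with `nvidia-smi -q -d POWER`) before relying on it, and note that changing the power limit typically requires administrator privileges.

```python
import shlex

# Assumed configurable TDP window for the A2, taken from the text (40-60 W).
A2_MIN_POWER_W = 40
A2_MAX_POWER_W = 60


def power_limit_command(gpu_index: int, watts: float) -> list[str]:
    """Build (but do not run) an nvidia-smi command that sets a board power limit.

    Hypothetical helper: the requested value is clamped to the assumed
    40-60 W window so the command never asks for an out-of-range limit.
    """
    clamped = max(A2_MIN_POWER_W, min(A2_MAX_POWER_W, watts))
    return ["nvidia-smi", "-i", str(gpu_index), "-pl", f"{clamped:g}"]


if __name__ == "__main__":
    # Print the command a site might run to cap GPU 0 at 45 W.
    print(shlex.join(power_limit_command(0, 45)))
```

Requests above 60W or below 40W are pinned to the nearest edge of the window, which keeps the sketch from emitting a command the driver would reject.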

Leading Performance for AI Inference Across the Cloud, Data Center, and Edge

AI inference continues to drive disruptive innovation across industries, including consumer internet, healthcare and life sciences, financial services, retail, manufacturing, and supercomputing. With its small form factor and low power consumption, the A2 joins the NVIDIA A100 and A30 Tensor Core GPUs to form a comprehensive AI inference portfolio across cloud, data center, and edge. A2 and the NVIDIA AI inference portfolio enable AI applications to be deployed with fewer servers and less power, resulting in significantly faster insights at a lower cost.

Ready for Enterprise Utilization

NVIDIA AI Enterprise, an end-to-end cloud-native suite of AI and data analytics software, has been certified for use with VMware vSphere in hypervisor-based virtual infrastructure. This enables the management and scaling of AI and inference workloads across multiple clouds.

NVIDIA-Certified Systems with NVIDIA A2 integrate compute acceleration and NVIDIA's high-speed, secure networking into enterprise data center servers built and sold by NVIDIA's OEM partners. The program enables customers to identify, acquire, and deploy systems that run traditional and diverse modern AI applications from the NVIDIA NGC™ catalog on a single high-performance, cost-effective, and scalable infrastructure.

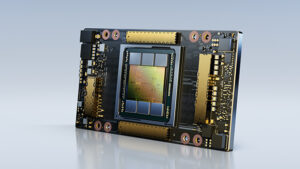

NVIDIA Ampere Architecture

The NVIDIA Ampere architecture is designed for the era of elastic computing, delivering the performance and acceleration that modern enterprise applications require. It sits at the core of the world's highest-performing, elastic data centers.
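The "With Sparsity" figures in the specifications are twice the dense figures. On Ampere Tensor Cores, this doubling comes from fine-grained 2:4 structured sparsity: in every group of four consecutive weights, at most two are nonzero. The pure-Python sketch below illustrates that pattern with simple magnitude pruning. It does not show how NVIDIA's software prunes or compresses models, and the function names are hypothetical.

```python
def prune_2_of_4(weights: list[float]) -> list[float]:
    """Magnitude-prune a weight row to a 2:4 pattern (illustrative only).

    In each consecutive group of four weights, the two entries with the
    smallest absolute value are set to zero.
    """
    if len(weights) % 4 != 0:
        raise ValueError("row length must be a multiple of 4")
    pruned = list(weights)
    for start in range(0, len(weights), 4):
        group = range(start, start + 4)
        # Indices of the two smallest-magnitude entries in this group.
        smallest = sorted(group, key=lambda i: abs(weights[i]))[:2]
        for i in smallest:
            pruned[i] = 0.0
    return pruned


def satisfies_2_of_4(weights: list[float]) -> bool:
    """True if every group of four consecutive weights has at most two nonzeros."""
    return all(
        sum(1 for w in weights[start:start + 4] if w != 0) <= 2
        for start in range(0, len(weights), 4)
    )


if __name__ == "__main__":
    row = [0.9, -0.1, 0.05, -0.7, 0.2, 0.3, -0.4, 0.1]
    pruned = prune_2_of_4(row)
    print(pruned)  # [0.9, 0.0, 0.0, -0.7, 0.0, 0.3, -0.4, 0.0]
    print(satisfies_2_of_4(pruned))  # True
```

In practice, a network pruned this way is usually fine-tuned afterward to recover accuracy before the sparse figures translate into useful speedups.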

Technical Specifications

| Specification | Value |
|---|---|
| Peak FP32 | 4.5 TFLOPS |
| TF32 Tensor Core | 9 TFLOPS (18 TFLOPS with sparsity) |
| BFLOAT16 Tensor Core | 18 TFLOPS (36 TFLOPS with sparsity) |
| Peak FP16 Tensor Core | 18 TFLOPS (36 TFLOPS with sparsity) |
| Peak INT8 Tensor Core | 36 TOPS (72 TOPS with sparsity) |
| Peak INT4 Tensor Core | 72 TOPS (144 TOPS with sparsity) |
| RT Cores | 10 |
| Media engines | 1 video encoder, 2 video decoders (includes AV1 decode) |
| GPU memory | 16GB GDDR6 |
| GPU memory bandwidth | 200GB/s |
| Interconnect | PCIe Gen4 x8 |
| Form factor | 1-slot, low-profile PCIe |
| Max thermal design power (TDP) | 40–60W (configurable) |
| Virtual GPU (vGPU) software support | NVIDIA Virtual PC (vPC), NVIDIA Virtual Applications (vApps), NVIDIA RTX Virtual Workstation (vWS), NVIDIA AI Enterprise, NVIDIA Virtual Compute Server (vCS) |
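One way to read the compute and bandwidth rows together is a first-order roofline estimate. The Python sketch below is an illustration, not an NVIDIA benchmark. It divides each dense peak figure from the table by the 200GB/s memory bandwidth (assumed to be decimal, 1 GB = 10^9 bytes) to find the arithmetic intensity, in operations per byte of memory traffic, above which a kernel becomes compute-bound. The helper names are hypothetical.

```python
# Dense (no-sparsity) peak throughput from the table, in tera-operations per second.
PEAK_TERA_OPS = {
    "FP32": 4.5,
    "TF32 Tensor Core": 9.0,
    "FP16 Tensor Core": 18.0,
    "INT8 Tensor Core": 36.0,
}

# GPU memory bandwidth from the table, assumed to be decimal GB/s (1 GB = 1e9 bytes).
MEMORY_BANDWIDTH_GB_S = 200.0


def ridge_point(peak_tera_ops: float, bandwidth_gb_s: float = MEMORY_BANDWIDTH_GB_S) -> float:
    """Arithmetic intensity (ops per byte) where the roofline turns from memory- to compute-bound."""
    return (peak_tera_ops * 1e12) / (bandwidth_gb_s * 1e9)


def attainable_tera_ops(intensity: float, peak_tera_ops: float,
                        bandwidth_gb_s: float = MEMORY_BANDWIDTH_GB_S) -> float:
    """Roofline estimate: the lower of peak compute and bandwidth * intensity."""
    memory_bound_tera_ops = bandwidth_gb_s * 1e9 * intensity / 1e12
    return min(peak_tera_ops, memory_bound_tera_ops)


if __name__ == "__main__":
    for name, peak in PEAK_TERA_OPS.items():
        print(f"{name:>16}: ridge point {ridge_point(peak):6.1f} ops/byte")
    # A kernel doing 10 FLOPs per byte sits well below the FP16 ridge point.
    fp16 = PEAK_TERA_OPS["FP16 Tensor Core"]
    print(f"FP16 at 10 FLOP/byte: {attainable_tera_ops(10, fp16):.1f} TFLOPS")
```

With these numbers, an FP16 Tensor Core kernel would need roughly 90 FLOPs per byte of memory traffic before compute, rather than bandwidth, becomes the limit. The model ignores caches and the gap between peak and achieved throughput, so treat it as a rough guide only.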