The NVIDIA A100 80GB PCIe card provides unprecedented acceleration to power the world’s highest-performing elastic data centers for artificial intelligence (AI), data analytics, and high-performance computing (HPC) applications. NVIDIA A100 Tensor Core technology supports a wide range of math precisions, so a single accelerator can be used for virtually any computing job. The A100 80GB PCIe can perform double-precision (FP64), single-precision (FP32), half-precision (FP16), and integer (INT8) computations.
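To give a feel for what the choice of precision means for memory, here is a minimal Python sketch that looks up the storage size of one value at each precision named above and estimates how many such values fit in 80GB. It assumes "80GB" means 80 × 10^9 bytes and ignores any memory reserved by the driver or ECC; the helper names are hypothetical and not part of any NVIDIA API.

```python
import struct

# struct format codes for the precisions named above:
# d = 64-bit float, f = 32-bit float, e = 16-bit float, b = 8-bit integer.
PRECISIONS = {"FP64": "d", "FP32": "f", "FP16": "e", "INT8": "b"}

# Assumption: "80GB" is taken as 80 * 10**9 bytes, with nothing reserved.
CARD_BYTES = 80 * 10**9


def bytes_per_value(precision: str) -> int:
    """Return the storage size in bytes of one value at the given precision."""
    return struct.calcsize("<" + PRECISIONS[precision])


def values_that_fit(precision: str, capacity_bytes: int = CARD_BYTES) -> int:
    """Return how many values of the given precision fit in the capacity."""
    return capacity_bytes // bytes_per_value(precision)


if __name__ == "__main__":
    for name in PRECISIONS:
        billions = values_that_fit(name) / 1e9
        print(f"{name}: {bytes_per_value(name)} B per value, "
              f"about {billions:.0f} billion values in 80GB")
```

Halving the precision doubles the number of values that fit, which is one reason lower-precision formats are attractive for large models.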

NVIDIA has doubled the memory of its A100 40GB PCIe data center GPU with the new A100 80GB version, which helps with more complex computing tasks across a wide variety of uses, from AI to scientific computing, data analytics, and ML research. The 80GB version does more than expand the A100's total memory capacity: it also raises the memory clock, which lifts memory bandwidth by about 25%.

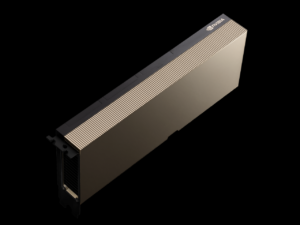

NVIDIA will continue to sell both the 40GB and the 80GB variants of the PCIe A100 card going forward. In general, this is a straightforward port of the 80GB SXM A100 to PCIe, with NVIDIA dialing down the TDP and the number of NVLinks to fit the form factor’s capabilities. NVIDIA’s 80GB PCIe card gives PCIe form factor customers a second, higher-performing accelerator option, aimed at those who need more than 40GB of GPU memory.

The upgrade itself is in the card’s onboard memory, which now uses newer HBM2E. HBM2E is the informal name for the most recent revision of the HBM2 memory standard, which in February of this year defined a new maximum memory speed of 3.2Gbps/pin. Thanks to manufacturing improvements, memory manufacturers have also been able to double memory capacity, from 1GB/die to 2GB/die. NVIDIA is taking advantage of both the greater capacity and the greater bandwidth that HBM2E offers.

The updated PCIe A100 reaches a total of 80GB of memory by using five 16GB, 8-Hi stacks. That works out to slightly over 1.9 terabytes per second of memory bandwidth for the accelerator, a 25% improvement over the 40GB version. As a result, the 80GB accelerator provides not only more memory capacity but also more memory bandwidth, which is unusual for a higher-capacity model. The 80GB version should therefore be faster than the 40GB version in memory bandwidth-bound applications even when they do not use the additional capacity.
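The capacity and bandwidth figures can be reproduced with simple arithmetic. The sketch below is a back-of-the-envelope calculation, not an official specification: it assumes the standard 1024-bit interface of an HBM2/HBM2E stack, uses the 2GB/die density and 8-Hi stack height mentioned in this article, and takes the 3.0Gbps per-pin speed quoted for this card. The function names are made up for illustration.

```python
# Assumption: each HBM2/HBM2E stack exposes a 1024-bit interface.
HBM2_BUS_BITS_PER_STACK = 1024


def capacity_gb(stacks: int, dies_per_stack: int, gb_per_die: int) -> int:
    """Total memory capacity in GB across all stacks."""
    return stacks * dies_per_stack * gb_per_die


def bandwidth_gb_per_s(stacks: int, gbps_per_pin: float) -> float:
    """Peak bandwidth in GB/s: total bus width (bits) x per-pin rate / 8."""
    total_bus_bits = stacks * HBM2_BUS_BITS_PER_STACK
    return total_bus_bits * gbps_per_pin / 8


if __name__ == "__main__":
    # Five 8-Hi stacks of 2GB dies.
    print(capacity_gb(stacks=5, dies_per_stack=8, gb_per_die=2), "GB")
    # Five stacks at 3.0Gbps per pin.
    print(bandwidth_gb_per_s(stacks=5, gbps_per_pin=3.0), "GB/s")
```

With these inputs the capacity comes to 80GB and the peak bandwidth to 1920GB/s, or roughly 1.9TB/s.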

However, the additional memory comes at a price: increased power consumption. To feed the denser, higher-frequency HBM2E stacks, NVIDIA has raised the card’s TDP to 300W. NVIDIA has long capped its PCIe compute accelerators at 250W, which is widely considered the limit for PCIe cooling, so this is a notable (though not outright startling) change in TDP. System integrators will have to find a way to provide an additional 50W of cooling per card to accommodate a 300W part. To be honest, this isn’t something I expect to be an issue for many designs, but I wouldn’t be shocked if certain integrators continue to offer only 40GB cards.

Even so, the PCIe form factor appears to be holding the 80GB A100 back somewhat. Its 3.0Gbps memory clock is about 6% lower than the 80GB SXM A100’s 3.2Gbps. NVIDIA therefore appears to be sacrificing some memory bandwidth to fit the card within even the expanded 300W profile. As a side note, NVIDIA does not appear to have changed the PCIe A100’s form factor. The card is powered by dual 8-pin PCIe power connectors and is designed for servers with strong chassis fans.
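The gap between the two cards is easy to check. The short sketch below computes how far the PCIe card sits below the SXM card in memory clock and in TDP; it treats the 400W lower bound quoted for SXM elsewhere in this article as the SXM TDP, which is an assumption, and `percent_lower` is a hypothetical helper.

```python
def percent_lower(value: float, reference: float) -> float:
    """Return how far `value` sits below `reference`, as a percentage."""
    return (reference - value) / reference * 100.0


PCIE_GBPS = 3.0   # memory clock of the 80GB PCIe A100, per pin
SXM_GBPS = 3.2    # memory clock of the 80GB SXM A100, per pin
PCIE_TDP_W = 300
SXM_TDP_W = 400   # assumption: lower bound of the "400W+" SXM figure

if __name__ == "__main__":
    print(f"Memory clock: {percent_lower(PCIE_GBPS, SXM_GBPS):.2f}% lower")
    print(f"TDP: {percent_lower(PCIE_TDP_W, SXM_TDP_W):.0f}% lower")
```

The memory clock deficit comes to a little over 6%, while the power budget is at least 25% smaller, so the PCIe card gives up far less memory speed than it does power.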

In terms of overall compute performance, the new 80GB PCIe card is expected to perform similarly to the 40GB one. NVIDIA’s new A100 datasheet doesn’t offer any official figures for how the PCIe card compares to the SXM card this time around, so we don’t know exactly where it will land. Given the continued TDP gap between the two form factors (300W vs 400W+), the real-world standing of the 80GB PCIe card relative to SXM should be similar to that of the 40GB PCIe card. This just goes to show that, in this day and age of TDP-restricted hardware, GPU clock speed isn’t everything.

The A100 80GB PCIe is aimed at the same broad use cases as the 80GB SXM A100: larger AI datasets and larger Multi-Instance GPU (MIG) instances. Many AI tasks benefit from a larger dataset, and GPU memory capacity has frequently been a bottleneck in this field because there is always someone who could use more memory. NVIDIA’s MIG technology, which was first introduced on the A100, also benefits from the memory increase: when running the full 7 instances, each can now have up to 10GB of dedicated memory.
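The 10GB-per-instance figure can be sanity-checked with a small sketch. It assumes that MIG divides the A100's memory into eight equal slices and that the smallest instance receives one of them, which matches the 10GB figure above; the constant and function names are illustrative only and do not come from any NVIDIA tool.

```python
# Assumption: A100 memory is split into 8 equal MIG memory slices,
# while at most 7 GPU instances can run at once.
MIG_MEMORY_SLICES = 8
MIG_MAX_INSTANCES = 7


def memory_per_instance_gb(total_gb: float, slices_per_instance: int = 1) -> float:
    """Dedicated memory in GB for an instance holding the given number of slices."""
    if not 1 <= slices_per_instance <= MIG_MEMORY_SLICES:
        raise ValueError("slices_per_instance must be between 1 and 8")
    return total_gb / MIG_MEMORY_SLICES * slices_per_instance


if __name__ == "__main__":
    for total in (40, 80):
        per_instance = memory_per_instance_gb(total)
        print(f"{total}GB card: {MIG_MAX_INSTANCES} instances "
              f"with {per_instance:.0f}GB each")
```

Under that assumption, the same seven-way split that yields 5GB per instance on a 40GB card yields 10GB per instance on the 80GB card.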

NVIDIA isn’t providing any pricing or availability details today, so that wraps things up. The 80GB PCIe A100 cards are, however, expected to be available soon.
